UPDATE (2020-02-16): Reports are coming in that this doesn’t work with the newest Adobe CC programs since they’re rotating the logs now. If this doesn’t work for you, it may be best to run a temporary file cleanup tool daily, such as Disk Cleanup included in Windows, CCleaner (warning: since Avast bought it, it’s a bit spammy now), or similar. Alternatively, if you are handy with such things, write a batch file that deletes the log files and add it to Task Scheduler as a task to run when you normally won’t be active on the machine.
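If you would rather script the cleanup than rely on a cleaning tool, here is a minimal sketch in Python instead of a batch file, assuming Python 3 is installed. The wildcard patterns are my guess at how rotated logs might be named, and `delete_adobe_logs` is just an illustrative name, so adjust both to what you actually see in your temp folder.

```python
import glob
import os
import tempfile

# Assumed patterns: the base names come from this post, the wildcards are a guess
# at rotated file names. Check your own temp folder before trusting them.
LOG_PATTERNS = ["PDApp*.log", "CEP*.log", "AdobeIPCBroker*.log"]


def delete_adobe_logs(directory=None, patterns=LOG_PATTERNS):
    """Delete matching log files in `directory` and return the paths removed."""
    directory = directory or tempfile.gettempdir()
    removed = []
    for pattern in patterns:
        for path in glob.glob(os.path.join(directory, pattern)):
            if os.path.islink(path):
                continue  # leave symbolic links (such as NUL decoys) alone
            try:
                os.remove(path)
                removed.append(path)
            except OSError:
                pass  # most likely still locked by a running Adobe process
    return removed


if __name__ == "__main__":
    for path in delete_adobe_logs():
        print("deleted", path)
```

Save it somewhere permanent and point a Task Scheduler task at it for a time when you normally won’t be using the machine.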

UPDATE (2019-10-21): Adobe’s support has noticed this post and is attempting to have the bug behind this issue fixed. See the comments!

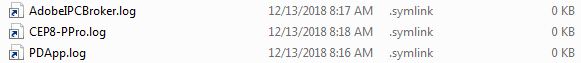

I recently discovered that Adobe’s Creative Cloud software had left a massive pile of PDApp.log files in my temporary directories, along with a few others such as CEP8-PPro.log and AdobeIPCBroker.log. These were taking up quite a bit of space. When I looked up PDApp.log, I found that some people have had serious issues with it consuming all available free space on their drives after a while. One user reported having a 600GB log file! Needless to say, several people have asked how they can control these log files, but as usual, Adobe support and forum users offered no actual solutions.

I’m here with your solution!

On Windows, you’ll need to open Task Manager and kill all Adobe processes to unlock the log files (not just the ones whose names start with Adobe, but also Creative Cloud processes and any node.exe instances they started). Then open an administrative command prompt and type the following three commands, one per line:

cd %temp%

del PDApp.log

mklink PDApp.log NUL:

This goes to your temporary directory, deletes the PDApp.log file (if you get an “in use” error here you missed an Adobe process in Task Manager), and creates a file symbolic link to a special device called NUL: which is literally the “nothing” device. When the Adobe apps write to PDApp.log now, all writes will succeed (no errors) but the data will simply be discarded.

You can repeat the delete/mklink process for any other Adobe logs you don’t want around anymore. Best of all, because symlinks on Windows require admin privileges to modify, the Adobe apps won’t rotate these fake log files out! Be aware that cleanup tools like CCleaner or Disk Cleanup may delete these links, so you may need to repeat these steps if you delete your temp folder contents with a cleaning tool. You may want to write a small batch file to run the commands in one shot if you like to delete temp files frequently.
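As an alternative to a batch file, here is a rough Python sketch of the same delete-and-link steps applied to several logs at once. It assumes Python 3, and it assumes that `os.symlink` pointed at `os.devnull` produces the same kind of link as `mklink ... NUL:`, which I have not verified on Windows. `make_decoy_links` and `LOG_NAMES` are names made up for illustration.

```python
import os

# Log names mentioned in this post; add any others you want silenced.
LOG_NAMES = ["PDApp.log", "CEP8-PPro.log", "AdobeIPCBroker.log"]


def make_decoy_links(directory, names=LOG_NAMES, target=os.devnull):
    """Replace each named log in `directory` with a symlink to the null device."""
    created = []
    for name in names:
        path = os.path.join(directory, name)
        try:
            if os.path.lexists(path):
                os.remove(path)  # fails if an Adobe process still holds the file
            # Assumption: equivalent to mklink; on Windows this normally
            # needs an elevated (administrator) prompt.
            os.symlink(target, path)
            created.append(path)
        except OSError as err:
            print(f"skipped {path}: {err}")
    return created
```

On Windows you would call something like `make_decoy_links(os.environ["TEMP"])` from an administrative prompt, after killing the Adobe processes as described above.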

On macOS (note: I haven’t tested this myself, but it should work) you should be able to kill all Adobe processes with Activity Monitor, open Terminal, and type this:

ln -sf /dev/null ~/Library/Logs/PDApp.log

Linux/UNIX administrators will recognize this as the classic “redirect to /dev/null” technique that we all know and love. I have no way to test this, so it’s possible that Adobe will rotate these links out. If it does, you can use this command to try to lock down the symlink:

sudo chown -h root:wheel ~/Library/Logs/PDApp.log

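This will ask for your account password since it requires privilege escalation. The command makes the link owned by “root”. The idea is that normal user programs can’t rename or delete it, though they can still write through the link, so the trick will continue to work. One caveat: on Unix-like systems, permission to delete or rename a file comes from the folder that contains it, and ~/Library/Logs belongs to your own account, so treat this as a best-effort measure rather than a guarantee.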

UPDATE: Some have asked what the purpose of the PDApp.log file is and whether it’s safe to do this. The answers are, respectively, “it logs information for troubleshooting Creative Cloud installation problems” and “yes, absolutely.” If you’re not having installation issues, this log is just taking up space and wearing out your SSD. If you need to “re-enable” it, simply delete the “decoy” links you made with these directions, and the logs will be created again as if nothing ever happened.
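If you made several decoy links and don’t remember which ones, a small Python sketch like this can find and remove them. It assumes Python 3 and that the links read back as the null device name (`/dev/null`, or `NUL` with or without the colon on Windows); I have not checked what Windows actually reports, so look at what it prints before relying on it. `remove_decoy_links` is a made-up name.

```python
import os


def remove_decoy_links(directory, target=os.devnull):
    """Remove symlinks in `directory` that point at the null device."""
    removed = []
    # Snapshot the listing first so we are not deleting while iterating.
    for entry in list(os.scandir(directory)):
        if not entry.is_symlink():
            continue  # real files and folders are never touched
        # Assumption: compare case-insensitively and ignore a trailing colon,
        # so "NUL:" and "nul" are treated as the same device.
        if os.readlink(entry.path).rstrip(":").lower() == target.lower():
            os.remove(entry.path)
            removed.append(entry.path)
    return removed
```

Run it against the same folder you created the links in (your temp folder on Windows, ~/Library/Logs on macOS). Only links that point at the null device are removed, so any other symlinks in that folder stay put.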